In general, my research spans computational game theory, online learning, multi-agent reinforcement learning and computational complexity, as well as their applications to agentic AI and economics. The ultimate goal of my research is to develop theoretically principled solutions to algorithmic challenges, drawing inspiration from modern decision-making environments and AI, in order to bridge the gap between real-world application and fundamental computational understanding. Below, I outline the main research pillars of my work:

Game Theory & Online Learning: No-regret learning under imperfect information

In this research pillar, we investigate the convergence properties and structural guarantees of no-regret dynamics within environments characterized by information asymmetry and partial observability. Specifically, I’m particularly interested in studying decentralized online learning in games under imperfect information, where no-regret dynamics translate to computing game-theoretic equilibrium notions—such as Nash equilibria or correlated equilibria—as well as adversarial settings where the learner competes against powerful, best-in-hindsight benchmarks.

Main results

- Learning under combinatorial structure: We provided a kernelization-based algorithmic framework for learning in polyhedral games under imperfect information (i.e., bandit and semi-bandit feedback), which facilitates efficient implementation and sampling for the classical combinatorial bandit GeometricHedge/ComBand algorithm and the celebrated Exp3-IX algorithm (NeurIPS 2025). Our work provides state-of-the-art results for learning coarse correlated equilibria across a wide array of games, such as congestion games and Colonel Blotto. We also managed to provide the first efficiently implementable algorithm for adversarial combinatorial bandits that achieves no swap regret (that is, a strong notion of regret associated with correlated equilibria) with polylogarithmic dependence on the large action size of combinatorial bandits (AISTATS 2026).

The complexity of finding stationary points and equilibria

This research pillar investigates the complexity of fundamental problems in non-convex optimization (OPT) and computational game theory (GT):

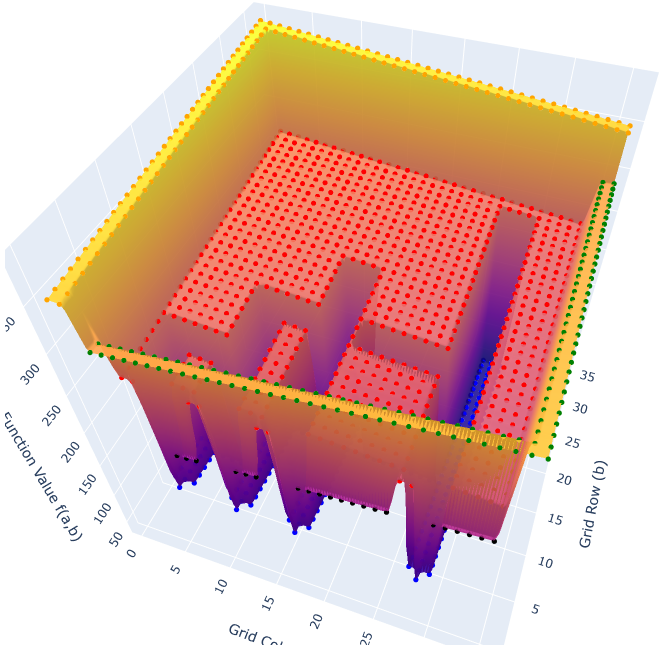

OPT: Our goal is to advance the computational understanding of finding second-order stationary points (SOSPs) in non-convex optimization. Such points are of remarkable interest for the ML/optimization community, since widely used optimizers—including Gradient Descent—can theoretically stuck in first-order stationary points (FOSPs) that may correspond to problematic strict saddle points. Therefore, our main research question is the following:

What is the computational complexity of avoiding strict saddle points both in unconstrained and constrained non-convex optimization?

Our contribution and its significance

We proved that the problem is PLS-complete both in constrained (arXiv 2026) and unconstrained (ICML 2024) optimization; thereby resolving important open questions in the field. Our results imply that unless PLS $\subseteq$ PPAD (which is widely believed to do so), there exists no iterative algorithm with a continuous, efficiently implementable update rule (such as Gradient Descent or Newton’s method) for finding SOSPs! Last but not least, our result in the constrained setting yields the first problem defined in a compact domain to be shown PLS-complete beyond the canonical Real-LocalOpt.

GT: Our goal is to study the computational complexity of finding equilibria in structured games, with a particular interest in Markov games; i.e., the game-theoretic framework capturing the problem of multi-agent reinforcement learning. The ultimate goal here is to identify the computational barriers of computing equilibria in such games and explore structured settings where we can provably escape the PPAD-hardness inherent in general normal-form games.

Agentic AI & Multi-agent reinforcement learning

The main direction of this research pillar is to study how AI agents can effectively coordinate toward shared objectives in complex, fully cooperative environments. In particular, we develop novel algorithmic frameworks based on multi-agent reinforcement learning (MARL). Our ultimate goal is to advance our understanding of the limits of decentralized decision making, as well as to explore agent/state modelling paradigms for minimizing the communication requirements of multi-agent systems, particularly under safety constraints.

Main results

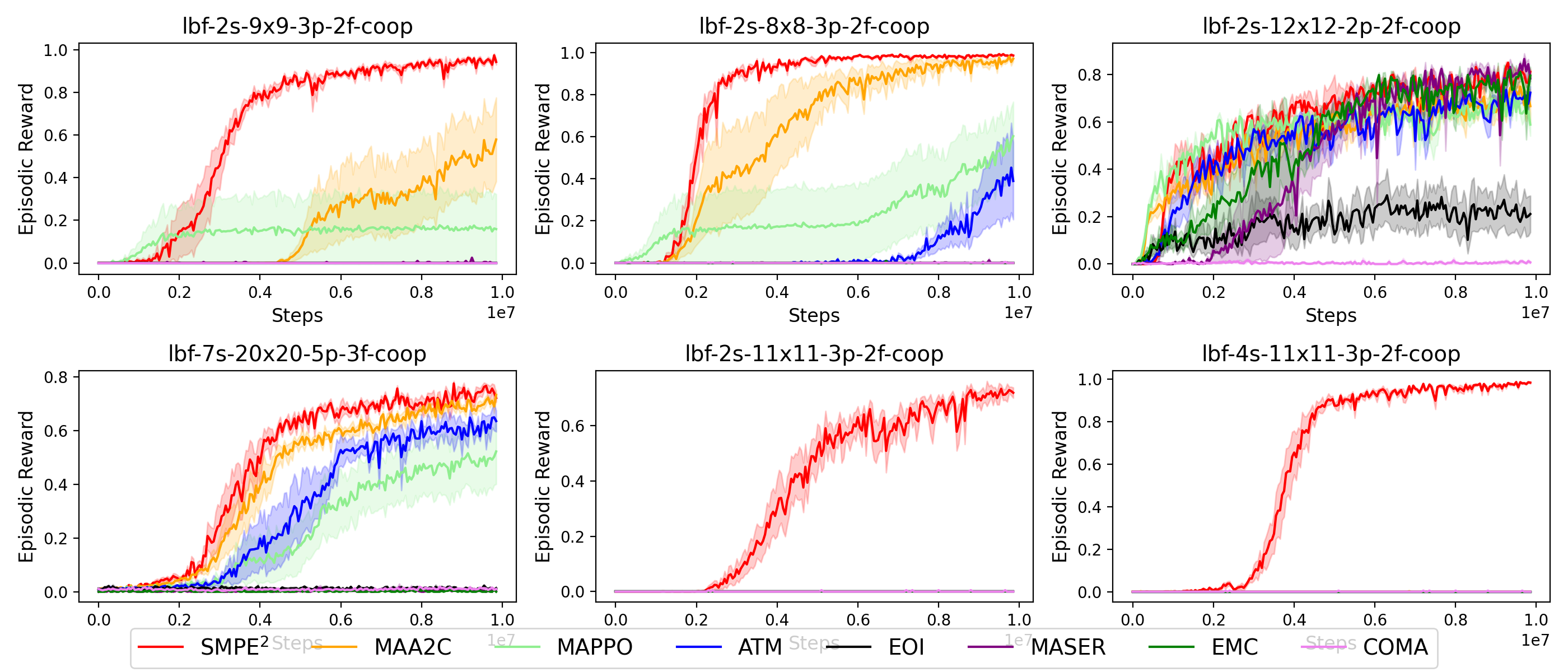

- A novel MARL algorithm, called SMPE, which leverages a novel state modelling framework for enhancing policy optimization and joint exploration in distributed partially observable environments where agents share no communication channels during execution (ICML 2025). Experimentally, we demonstrated that our method outperforms state-of-the-art MARL algorithms in complex fully cooperative tasks from the well-established MPE, LBF, and RWARE benchmarks.

- In (AAMAS 2025), we highlight the crucial need for expanding systematic MARL evaluation across a wider array of benchmarks by showing that many algorithms, hailed as state-of-the-art mostly on the widely used SMAC benchmark, may significantly underperform standard MARL baselines on fully cooperative testbeds.